Bridging Resourcefulness and Semantic Understanding

Weeknotes 331 - New LCMs, semantic AI vaporware, and triggering human resourcefulness. What is happening in our relationship with AI? This and a look back at the news of last week.

Hi all!

What did happen last week?

Let me see, what is worth mentioning here? I made draft proposals to share with consortium partners, finalized the Civic Protocol Economy report, had meetings with nice people, and worked on the Wijkbot Kit (aka Robot perspective). Also, we sent out the responses to the proposals for the ThingsCon RIOT publication after evaluating them, and reached out to all exhibitors for the next Salon and exhibition on Generative Things. This week, I will update the website with all the details! It was nice to discover the mention in Handboek Publiekwijs. And the op-ed by Laurens mentioned someone who could be me :)

Yesterday I attended a session on futuring in systemic co-design. What are the models to jump out of reality? Embedding it in organisational interplays with the future is one of the conclusions. The first questions were asked; they need deeper exploring.

What did I notice from the news last week?

The long list was quite long this week, longer than usual.

- There was a message earlier about the delay of truly intelligent features in Apple Intelligence. John Gruber wrote an opinion piece that got traction. I hope Apple will get things right in time.

- Google impressed with Gemma 3 and got a lot of attention for its robot AI. There was also backlash over the fact that Gemini can see your search history.

- Amazon is just capturing everything you say. Echoing becoming real.

- SXSW Interactive has ended, and I felt less of an overall vibe than in earlier years. The lack of political critiques was remarkable and worrisome. Is it indifference or fear?

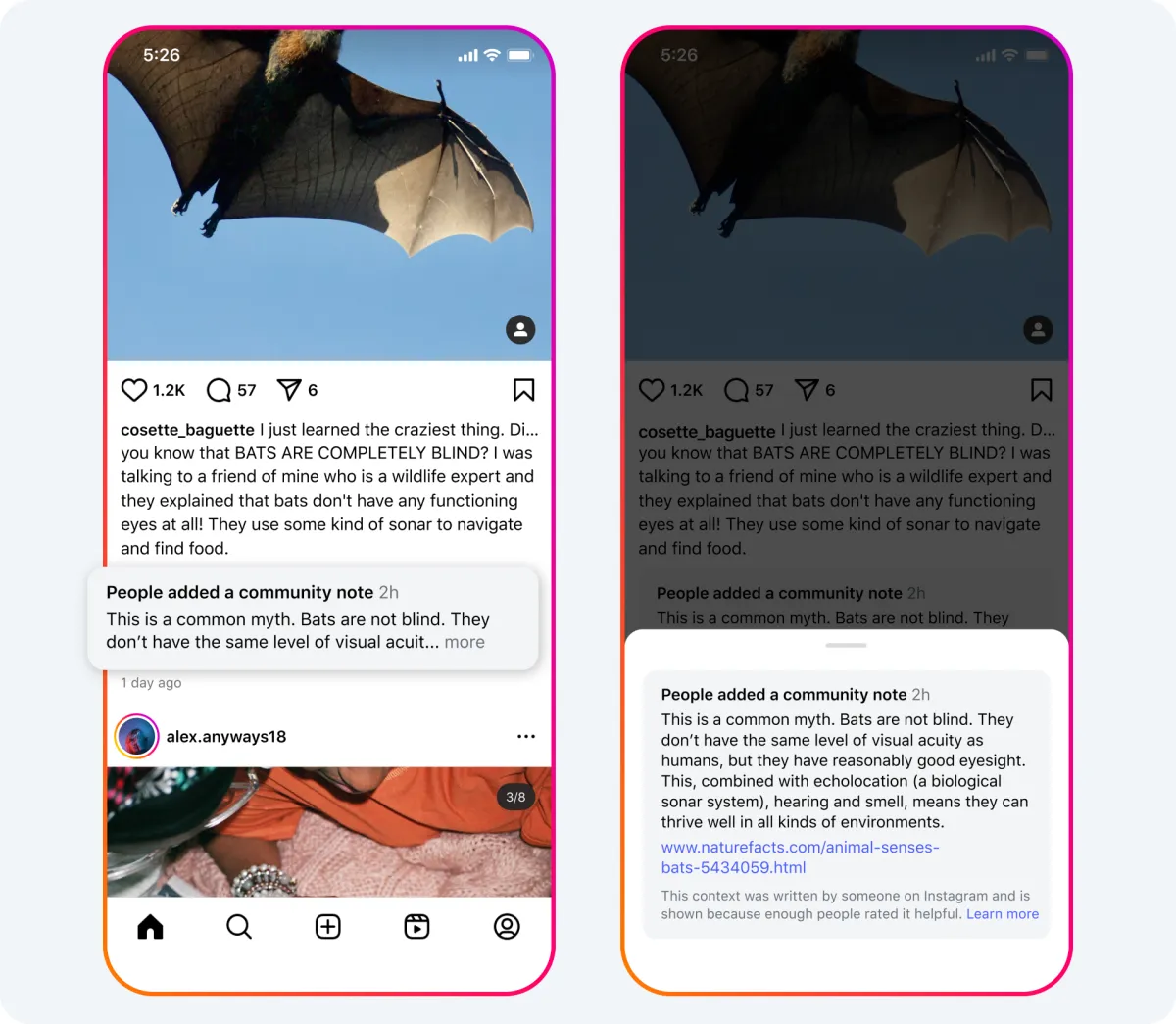

- Community notes are now at Meta. A sign of the times.

- There seems to be a fork in the road regarding whether to focus AI on consumer-facing media or vibe coding for personal computing. I expect vibe coding to stay hot in the coming weeks.

- Manus still got attention as people started to use it more and more.

- How will this become Public Intelligence?

- And much more, see below.

What triggered my thoughts?

In the rapidly evolving landscape of artificial intelligence, we find ourselves at a crossroads where human ingenuity meets machine capability. It has been a topic here before, but I was triggered by a piece on the next generation of LCMs (Large Concept Models) and the mention of Semantic AI in a piece on Apple Intelligence vaporware. It made me think about another project from way back, on Resourceful Aging.

Beyond Language Models: The Promise of Semantic Understanding

The AI community appears to be shifting from Large Language Models (LLMs) toward what some are calling Large Concept Models. This transition signals a fundamental change in approach. While LLMs excel at generating creative language patterns, LCMs promise something more profound: an understanding of the world's semantic structure rather than mere manipulation of words and phrases.

This evolution reminds me of Apple's unfulfilled promise of "Semantic AI" at last year's developer conference. Positioned as a cornerstone of Apple Intelligence, this semantic capability has largely failed to materialize. This echoes earlier conversations around Web3, back in the years before it was about tokens and DAOs, when it was envisioned as a "semantic web": a network that understood meaning rather than just connecting data points.

Human Resourcefulness: Our Unique Advantage

Parallel to these technological developments, I've been reflecting on human resourcefulness—our remarkable ability to repurpose, reimagine, and adapt our surroundings to serve new functions. Seven years ago, the project "Resourceful Aging" explored this quality, specifically among elderly individuals with limited physical capabilities. The researchers observed how seniors ingeniously repurposed everyday objects to overcome challenges, then attempted to capture these insights using AI to inform design solutions.

What makes this approach particularly compelling is that it didn't attempt to replace human resourcefulness but rather to learn from it. The AI served as a bridge between ingenious human solutions and scalable design implementation.

Machine Resourcefulness as a Catalyst

What if we reconsidered our understanding of resourcefulness itself? While we typically view resourcefulness as an inherently human trait, emerging AI systems display their own forms of adaptive problem-solving that, while different from human approaches, can be remarkably effective. These machine-generated solutions often follow unexpected patterns—combinations and applications that human minds might never consider due to our inherent biases and cognitive limitations.

This machine resourcefulness, when presented to humans, can serve as a catalyst for our own creative thinking. When an AI suggests an unconventional approach or makes connections between seemingly unrelated domains, it can jolt us out of established patterns and trigger new pathways of thought. The machine doesn't need to perfectly mimic human understanding to be valuable; its very otherness becomes an asset.

In our complex, constantly changing world, the most exciting frontier may not be machines that understand us perfectly, but machines that can surprise us productively. Large Concept Models might eventually offer both semantic understanding and a unique form of machine resourcefulness that complements and stimulates our human creativity. Rather than merely serving as tools that extend our capabilities, these systems could become genuine thought partners that challenge us to see problems differently.

The future of human-AI interaction may thus be less about partnership in the conventional sense and more about creative provocation—AI systems that don't just solve our problems but help us reimagine them entirely. As machines develop their own brand of resourcefulness, they might become not just amplifiers of human thinking but transformative influences that expand the boundaries of what we consider possible. This is in the end what vibe coding is about.

What inspiring paper to share?

Felienne Hermans had a nice piece in a Dutch newspaper on techbro-culture. She referenced an earlier paper of hers on the topic.

In this paper, we present feminism as a philosophical lens for analyzing the programming languages field in order to help us understand and answer the motivating questions above. By using a feminist lens, we are able to explore how the dominant intellectual and cultural norms have both shaped and constrained programming languages.

Felienne Hermans and Ari Schlesinger. 2024. A Case for Feminism in Programming Language Design. In Proceedings of the 2024 ACM SIGPLAN International Symposium on New Ideas, New Paradigms, and Reflections on Programming and Software (Onward! '24). Association for Computing Machinery, New York, NY, USA, 205–222. https://doi.org/10.1145/3689492.3689809

What are the plans for the coming week?

This week will be similar to last week, with time to update some communications on Cities of Things, write proposals, and catch up with people. I might check out a meetup on sustainability in Service Design on Wednesday if the waiting list shortens. The talk on Probing Futures - co-designing change for tomorrow in the same timeslot is interesting too, but for me it is similar to the Esc event on Monday.

I intend to check the demo against fascism/racism in Amsterdam this Saturday.

See you next week!

References with the notions

Human-AI partnerships

AI, as in Apple Intelligence, is the talk of the town. Delays on the integrated AI promises.

Google has new things with Gemini visual tools and smaller models. Deep Search has a different meaning, looking into your history. Still, AI search is unreliable.

Agentic and other AI upgrades of existing services at Zoom, OpenAI, Snap.

Kevin Kelly used to have a world brain as a model, and now adds the concept of Public Intelligence.

Is our mental capacity eroding? A nice topic for the next bd party.

Vibe coding this week. Is that contributing to the Jevons Paradox after all?

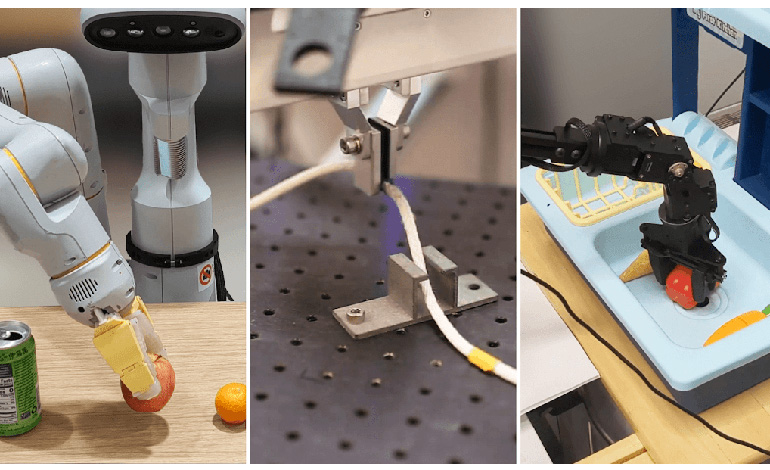

Robotic performances

Google has a robotic AI. Is a ChatGPT moment for robotics near?

Issues with the home robotics OG

New moves.

Immersive connectedness

Starlink is triggering competition

Immersive connectedness should be extended with immersive intelligence.

New futures, new applications

This will be the immersive aesthetics

Tech societies

The new black is agentic and reasoning models combined.

Community notes are the excuse for not taking responsibility.

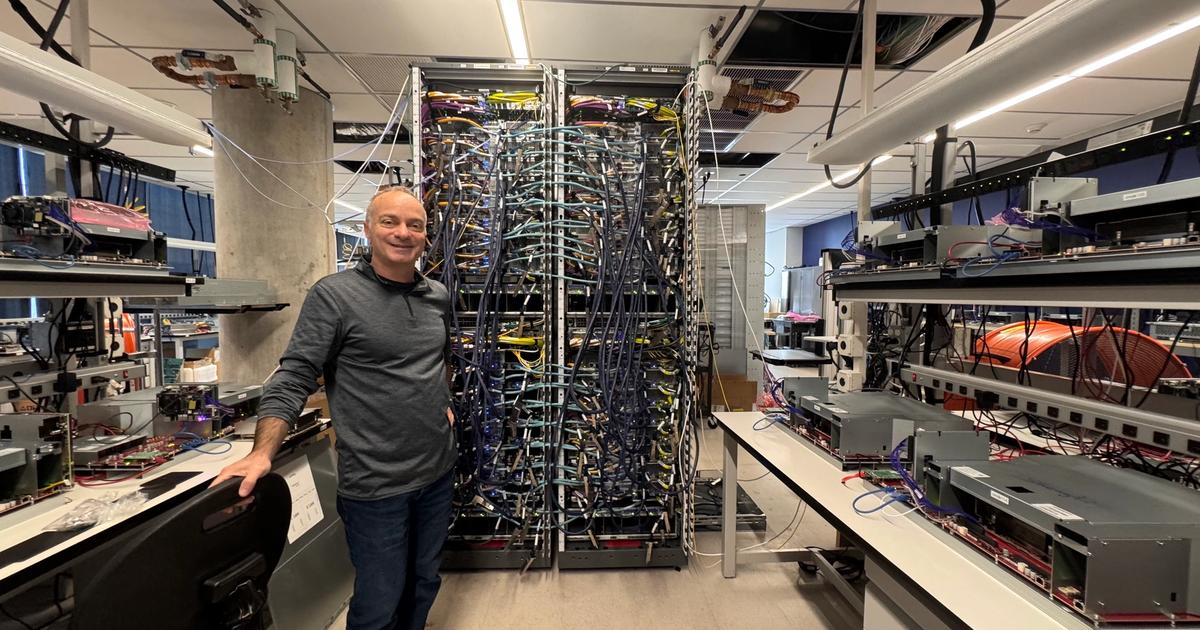

In the end it comes down to physics.

Bigger ownership politics. Web3 is far away.